Recursive thinking, Newcomb's problem, and free will

How the way we think locks us to the way we think

Do you think the joke I’m about to tell you is funny? How can you conclude that if you haven’t even heard the punchline? The punchline is “you must first understand recursion”. Can you tell if it’s funny now? I bet you want the premise: “to understand recursion”.

To understand recursion you must first understand recursion.

Humans are not particularly fond of recursive thinking. Computers are much better at it, yet even for them recursive code often consumes more resources (call-stack frames, repeated work) than iterative alternatives. So aversion to recursivity isn’t exclusive to humans.
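Here is a minimal Python sketch of that resource cost. It uses Fibonacci numbers as a stand-in example of my choosing, since the essay doesn't name one. The naive recursive version recomputes the same subproblems and makes thousands of calls, while the iterative loop runs once per term. This exaggerates the gap, because a memoized recursive version would be far cheaper. The point is only that the straightforward recursive formulation isn't free.

```python
def fib_recursive(n, calls):
    """Naive recursive Fibonacci; `calls` is a one-element list used as a call counter."""
    calls[0] += 1
    if n < 2:  # base case: without it the recursion would never end
        return n
    return fib_recursive(n - 1, calls) + fib_recursive(n - 2, calls)


def fib_iterative(n):
    """Same result with a simple loop: one step per term, no call-stack growth."""
    a, b = 0, 1
    for _ in range(n):
        a, b = b, a + b
    return a


calls = [0]
print(fib_recursive(20, calls), "after", calls[0], "calls")  # 6765 after 21891 calls
print(fib_iterative(20), "after 20 loop iterations")         # 6765 after 20 loop iterations
```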

However, it is my contention that certain ideas in philosophy, physics, mathematics, and other fields require thinking deeply in recursive terms. And these ideas are not exotic hypothetical experiments: they affect every waking moment of everyday life.

I’m going to argue that the way you react to everyday situations depends on your view of free will. Your view of free will depends on how you believe you make choices. The way you believe you make choices affects the choice you make in Newcomb’s problem, which turns out to depend on the same assumptions about free will you started with.

Did I mention I think recursive thinking helps navigate these ideas?

Not so fast

Let’s consider a self-referential puzzle.

If you choose an answer to this question at random, what is the chance that you will be correct?

Initially you may think the chance of selecting the right answer at random is 25%, so A) is correct. But so is D), which means you have a 50% chance of selecting a “correct” answer, and now those answers are incorrect, since the chance is not 25%.

If you do the same for every possible answer, it turns out there’s no correct answer. Some people think this is a paradox, but it’s not. It’s just not trivial for most people to think in recursive terms.

Let’s consider another set of answers:

In this case only one answer says 25%, so the chance of choosing it at random remains 25%. Here there is a correct answer: A).

Just because the answers of a problem are self-referential doesn’t mean the problem is a paradox.
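The self-consistency check described above can be sketched in a few lines of Python. The option values below are hypothetical, because the original answer sets aren't reproduced in this text. The first list assumes the widely shared version of the puzzle (25%, 50%, 60%, 25%), and the second has a single 25% answer. An option counts as correct only if a random pick lands on an option stating that same value with exactly the probability it states.

```python
from fractions import Fraction


def pct(p):
    """Convert a whole-number percentage into an exact fraction."""
    return Fraction(p, 100)


def consistent_answers(options):
    """Return the letters of options whose stated probability is self-consistent.

    An option stating probability v is correct exactly when a uniformly random
    pick lands on an option stating v with probability v, i.e. when
    (number of options stating v) / (number of options) == v.
    """
    n = len(options)
    result = []
    for letter, value in zip("ABCDEFGH", options):  # supports up to 8 options
        matches = sum(1 for other in options if other == value)
        if Fraction(matches, n) == value:
            result.append(letter)
    return result


# Hypothetical option sets, listed as A, B, C, D.
first = [pct(25), pct(50), pct(60), pct(25)]   # two answers say 25%
second = [pct(25), pct(50), pct(60), pct(75)]  # only one answer says 25%

print(consistent_answers(first))   # []: no answer is consistent
print(consistent_answers(second))  # ['A']
```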

If you really want a challenge, try this:

Still not a paradox, just challenging for most humans to conceive.

Let’s keep thinking in recursive terms.

The universe doesn’t take shortcuts

Consider the following grid representing a cellular automaton:

Each row is computed from the bits of the previous row, following a fixed rule. Focus on the first activated bit in row 2: the three cells above it, in row 1, are 001, and the fixed rule says that in that case the cell below should be 1. That’s how every bit in row 2 is determined.

These are the rules:

Suppose you already have row 2. Computing row 4 would be trivial if you had row 3, but you need to compute row 3 first. You may think there has to be a shortcut, but as far as anyone knows, there isn’t. Go ahead and try to compute row 4 without row 3.

This is the main surprising finding of Rule 30: even simple deterministic rules can lead to unpredictable outcomes. If you want to know the state of row 1000, you need the full state of row 999, for which you need row 998, and so on. Stephen Wolfram calls this inability to take shortcuts computational irreducibility. So even if the universe is completely deterministic, if it’s also computationally irreducible, then to know precisely what’s going to happen one year into the future you would need to simulate every tick of the entire universe. By the time you managed that, the year would have already passed, making the attempt to predict the future computationally self-defeating.
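Here is a minimal Python sketch of the row-by-row computation. It assumes Rule 30 (named above), a single activated cell in the middle of the first row, and cells beyond the edges treated as 0. Rows are numbered from 0 here. The binary digits of the rule number act as the lookup table: the 3-cell neighborhood, read as a binary number, selects which bit of 30 becomes the new cell. Note that `run` can only reach row n by computing every row before it. No shortcut is known for Rule 30, although none has been proven impossible either.

```python
RULE = 30  # 30 = 0b00011110: neighborhoods 100, 011, 010 and 001 produce 1


def step(row, rule=RULE):
    """Compute the next row of an elementary cellular automaton.

    Cells beyond the edges are treated as 0. Each new cell is bit number
    (left*4 + center*2 + right) of the rule number.
    """
    padded = [0] + row + [0]
    return [
        (rule >> (padded[i - 1] << 2 | padded[i] << 1 | padded[i + 1])) & 1
        for i in range(1, len(padded) - 1)
    ]


def run(steps, rule=RULE):
    """Return rows 0..steps, starting from one activated cell in the middle."""
    width = 2 * steps + 1  # wide enough that zero-padding at the edges changes nothing
    row = [0] * width
    row[steps] = 1
    rows = [row]
    for _ in range(steps):  # row k is only reachable by computing rows 0..k-1 first
        row = step(row, rule)
        rows.append(row)
    return rows


for r in run(8):
    print("".join("#" if cell else "." for cell in r))
```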

This has a striking implication: if the physical processes underlying your decisions are computationally irreducible, then no one — not you, not a demon with complete knowledge of physics, not even a god — can know what you will decide without simulating the entire causal chain leading up to the decision. The answer doesn’t exist anywhere in the universe before the computation completes.

It’s turtles all the way down.

The orphan cause

Can you think your next thought before you think it? Trying to think in these terms feels like trying to pull yourself up by your own bootstraps.

Suppose I ask you to choose between heads and tails. Was that choice independent of the state of the universe (in other words, did it come from you and only you)? Or was your choice the result of prior causes?

Let’s say you chose heads, and then we rewind the state of the universe to the moment before I asked you to choose. Is it possible for you to choose anything other than heads?

This is a tricky self-referential problem, because if you could not have chosen anything else, then whatever you believe is possible is the only thing you could believe.

I must believe in free will, I have no choice.

Let’s try to unpack this. If Adam believes he has an ability to choose otherwise (he chose heads, but he could have chosen tails), but he does not have such ability, then he is doomed to believe something that is false, but it’s not his fault. He is stuck in a vicious loop, and there’s nothing he can do about it.

Someone who believes in free will might argue they are not like Adam, because they can always choose otherwise in any given moment. But that is assuming a very big if.

Free will

I’m not going to attempt to solve the deep philosophical problem of libertarian free will here. Fortunately, I don’t need to: all I need to do is demonstrate that it’s plausible that it doesn’t exist.

The lazy thing to do is fall back on the burden of proof: he who makes the claim bears the burden of proof (onus probandi actori incumbit). If you claim that free will exists, then the burden of proof is yours; I don’t have to prove that free will doesn’t exist. That’s really all that’s needed, but I’m going to attempt to show that free will’s absence is not only plausible, but likely.

I’m going to make a novel argument that requires that you grant me a very simple move: you are not in control of your subconscious. If you grant me that, then it follows that the only thing you could be is the part of the mind that is not subconscious: you are a consciousness.

It turns out the notion of “self” is not as straightforward as it might seem (see Knowledge of the Self), and it has direct implications for free will. But if you suppose you are a consciousness, then many notions just follow downstream.

Consider what happens when an object (say, a glass of water) is falling and you manage to catch it. If there are people around you, they might be surprised and tell you “good reflexes!”, but be honest with yourself: you are as surprised as they are. If you are a consciousness, then it makes sense that it was your subconscious that made the immediate decision to catch the object, and your conscious mind only became aware of what happened after the fact. There are many everyday situations, and experiments, suggesting that the subconscious is in fact the one making the decisions.

You could argue that doesn’t preclude the conscious from making at least some decisions. That is true, but there’s still a non-zero chance that the subconscious makes all of them — and no one has demonstrated otherwise. It does not seem to be possible to prove beyond reasonable doubt that even a single decision originated in the conscious alone.

Let’s zoom in on the moment you make a decision. Empirical evidence suggests that it takes around 200 ms for the brain to become consciously aware of a given input. There’s also evidence that consciousness is not continuous, but happens in discrete chunks. So in one second there could be only about 5 moments (1000 ms / 200 ms) that your consciousness can discern. Therefore, in order for you to make a decision in moment 1, you need to commit to it in moment 0. But making a commitment is a decision in itself, which in turn requires a prior decision. It’s an infinite regress that leaves no room for your conscious mind to make a decision about making a decision.

A useful metaphor for how the mind works, popularized by Jonathan Haidt, is that the conscious mind is like a rider on top of an elephant, the subconscious. Taken to its logical end, the rider has no control over where the elephant goes, but if the elephant decides to go left, the rider attributes that decision to himself. Of course, that’s a post hoc rationalization of a decision that was already made. And that’s all the conscious mind does: make up stories about decisions it did not make.

You may remain unconvinced and still believe free will is likely, but would you bet your life on it? If you do, there’s a non-zero chance it’s because that’s all you could do.

Prelude

Before we tackle the main problem, let’s consider a couple of simpler ones.

Conditional

Let’s say you are considering sharing an apartment with a guy named Jeff. He seems to be a perfectly normal and pleasant person, but he was accused of murder. The verdict was not-guilty, so he was acquitted. Should you move in with him on the assumption that the jury believed he did not commit the murder?

There’s always a chance that the jury made a mistake, but to make the problem cleaner let’s assume that’s not the case here. This is a perfect jury that evaluated the evidence as well as any jury could.

My bet is that there are going to be two kinds of answers. A) If there was good reason to believe he was a murderer, the jury would have found him guilty, and they didn’t, so it’s justifiable to believe he isn’t a murderer. B) Just because a jury didn’t find him guilty doesn’t necessarily imply that he isn’t.

There is a correct logical answer, and I’ll come back later to explain it.

Causation

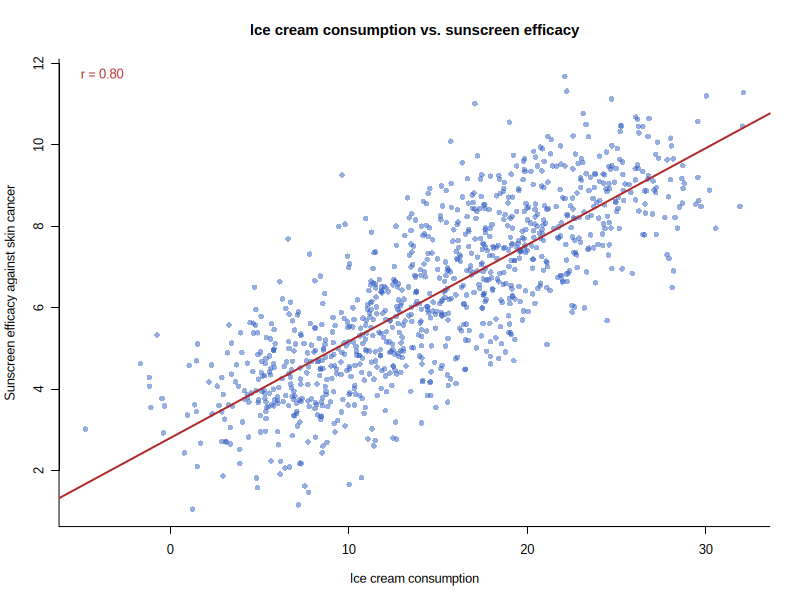

Let’s imagine a hypothetical study that finds a correlation between sunscreen efficacy against skin cancer and eating ice cream: the more ice cream you eat, the more sunscreen reduces your risk of skin cancer. And the correlation is strong.

This may sound nonsensical, but just run with it. The first variable is the number of ice cream scoops consumed in the last month. The second variable is sunscreen efficacy, measured as the difference in skin cancer incidence (cases per 100,000) between non-users and users; no difference means no efficacy.

The question is: if you have eaten a lot of ice cream this month, should you use more sunscreen?

Again, I think there are going to be two camps. A) There is no causal relationship between sunscreen efficacy against skin cancer and eating ice cream, therefore a rational person should discount the correlation and not use more sunscreen than usual. B) It doesn’t matter if there’s no causal relationship: the correlation alone is sufficient to justify changing our behavior and using more sunscreen, even if we don’t understand why.

Newcomb’s problem

We’ve finally arrived at the eye of the storm: Newcomb’s problem.

Before describing the problem, let me introduce its premise: Omega. Omega is a super-intelligent being capable of predicting human choices with near-certain accuracy. You are going to be presented with two choices, and Omega will almost certainly predict yours correctly.

Omega made its prediction before you heard the problem.

Now, I’m going to introduce a twist to the classical problem. I’m not Omega, I have no idea how accurate I could be at predicting your choice, but I’ll try. You’ll see my prediction later.

Here’s the problem. You enter a room and are presented with two boxes: T and M. The first box (T) is transparent, and you can see it has $1,000 in it. The second box (M) is opaque, and you don’t know what’s in it. Omega explains that you can either A) take both boxes (T+M) or B) take only the mystery box (M). If Omega predicted that you were going to take both boxes (T+M), then it put $0 in the mystery box. If Omega predicted that you were going to take only one box (M), then it put $1,000,000 in the mystery box.

What do you choose?

Once again we have two camps. A) There’s no causal relationship between your choice and the contents of the mystery box, therefore a rational agent should discount the correlation and choose what’s going to maximize the utility regardless: two boxes. B) It doesn’t matter if there’s no causal relationship, the correlation alone is sufficient to justify adjusting our behavior to maximize the utility: one box.
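To make the two camps concrete, here is a small Python sketch of the payoffs described above. `payoff` encodes the rules of the boxes. `expected_payoff` does the correlation-based calculation that camp B relies on, treating Omega's accuracy as the probability that the prediction matches whatever you choose. A causal decision theorist rejects exactly that step. The dominance check at the end shows their reasoning: for any fixed content of the mystery box, taking both boxes adds $1,000. The accuracy values are illustrative assumptions.

```python
from fractions import Fraction

TRANSPARENT = 1_000        # box T, always visible
MYSTERY_FULL = 1_000_000   # box M if Omega predicted one box


def payoff(choice, prediction):
    """Money received for a choice ('one' or 'two') given Omega's prediction."""
    mystery = MYSTERY_FULL if prediction == "one" else 0
    return mystery + (TRANSPARENT if choice == "two" else 0)


def expected_payoff(choice, accuracy):
    """Expected payoff if P(prediction == choice) equals `accuracy` (camp B's view)."""
    other = "two" if choice == "one" else "one"
    return accuracy * payoff(choice, choice) + (1 - accuracy) * payoff(choice, other)


for accuracy in (Fraction(1, 2), Fraction(9, 10), Fraction(99, 100)):
    one, two = expected_payoff("one", accuracy), expected_payoff("two", accuracy)
    print(f"accuracy {float(accuracy):.2f}: one box {float(one):,.0f}, two boxes {float(two):,.0f}")

# Camp A's dominance argument: with the prediction held fixed, two boxes always pay $1,000 more.
for prediction in ("one", "two"):
    assert payoff("two", prediction) - payoff("one", prediction) == TRANSPARENT
```

Under this calculation, one box pays more as soon as accuracy exceeds 1,001,000 / 2,000,000 = 0.5005. So even a predictor barely better than chance tips the balance.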

My prediction is that if you have chosen A) in the previous problems, you likely chose A) in this one as well. If you have chosen B) in the previous ones, you likely chose B) in this one.

I contend there’s a correct rational answer.

Postlude

There’s a correct answer for all the aforementioned problems.

Conditional

If you assume that your prospective flatmate Jeff is innocent, you’ve committed the converse error (also called affirming the consequent): if the jury believed Jeff was innocent (a), then the verdict would be not-guilty (b); the verdict was not-guilty (b); therefore the jury believed Jeff was innocent (a).

I shouldn’t need to explain why this is a fallacy: it’s one of the simplest errors in reasoning, about as basic as 2+2=4 in mathematics. But for anyone who doesn’t see it, just imagine another material conditional: c ⇒ b. There are other reasons why the verdict might be not-guilty. For example, let’s say the entire jury did indeed believe Jeff was guilty, but not beyond reasonable doubt. People wrongly believe that not-guilty is the same as innocent, but it isn’t: a not-guilty verdict means the prosecution didn’t meet its burden of proof.

So it’s possible that Jeff was a murderer, and the jury believed so, but the prosecution just didn’t provide enough evidence.
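The converse error can be checked mechanically with a brute-force truth table. In this Python sketch, a is “the jury believed Jeff was innocent”, b is “the verdict was not-guilty”, and c is the alternative reason above (the jury believed he was guilty, but not beyond reasonable doubt). The search looks for assignments where every premise holds but the conclusion a is false. Finding any such assignment means the inference is invalid.

```python
from itertools import product


def implies(p, q):
    """Material conditional p ⇒ q."""
    return (not p) or q


counterexamples = [
    (a, b, c)
    for a, b, c in product([False, True], repeat=3)
    if implies(a, b) and implies(c, b) and b  # premises: a ⇒ b, c ⇒ b, b
    and not a                                 # ...yet the conclusion a fails
]
print(counterexamples)  # [(False, True, False), (False, True, True)]
```

The second counterexample is exactly the scenario described: the jury did not believe Jeff was innocent, and the verdict was not-guilty anyway because of c.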

The correct answer is B).

Causation

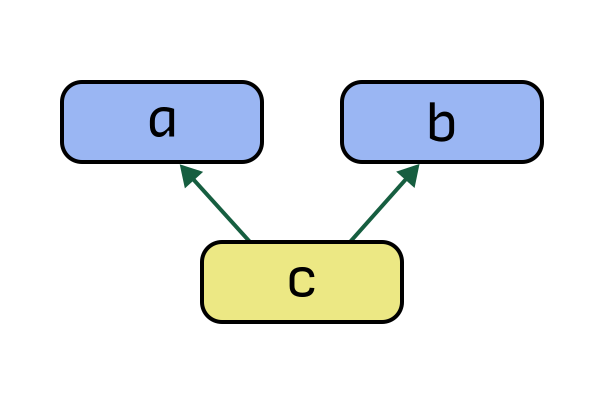

For very similar reasons, just because we don’t see a direct causal relationship between a and b doesn’t mean there isn’t an indirect causal path. For example, there could be a common cause: (c ⇒ a) ∧ (c ⇒ b).

Could there be a common cause behind eating ice cream and sunscreen efficacy? How about summer? In summer more people eat ice cream, and with more sun exposure the skin cancer rate among people who don’t use sunscreen increases, widening the gap between non-users and users. If that’s the case, then it behooves you to use more sunscreen, not because ice cream makes it more effective, but because its effectiveness is correlated with summer.

The correct answer is B).

Newcomb’s problem

For basically the same reason, the correct answer of Newcomb’s problem is B) one box.

If we entertain the perspective of two-box agents, all the arguments boil down to: a cannot cause b, therefore a rational agent should discount the correlation between a and b. There is no justification for that assumption other than that it seems self-evident to them.

A two-boxer might argue that if we follow causal decision theory (CDT), then the correct answer is A), but that’s circular reasoning. Why should we follow CDT? Because it leads to the “correct” answer? Under other decision theories, such as evidential decision theory, that’s not the correct answer.

As we saw in the ice cream problem: the fact that a doesn’t have a direct causal relationship with b doesn’t necessarily mean we should ignore the correlation. It’s that simple.

The correct answer is B).

Reality

One of the biggest obstacles for two-boxers in correctly assessing Newcomb’s problem is that it seems to defy reality. Some people believe it’s not possible for Omega to predict their decision with a high level of accuracy. Others accept the near-perfect accuracy hypothetically, but argue it necessitates retrocausality (the future altering the past), and therefore it doesn’t apply in our universe.

But what is the universe we live in? Most rational people accept some level of determinism, but many also hold that quantum mechanics gives rise to genuine randomness, so the future cannot be completely predicted.

This is good news for free will, or so they believe, but it’s not. The standard notion of free will relies on you being able to choose otherwise. If the reason tails was chosen instead of heads is randomness, then it wasn’t you who made that happen, was it?

But it gets worse, because that’s just one interpretation of quantum mechanics. Even if it’s the most common interpretation, it relies on an assumption sometimes called the free will assumption (I’m not joking). Bell’s theorem assumes statistical independence: the settings of the measuring devices are uncorrelated with the properties of the particles being measured. John Stewart Bell tied this to the experimenters being free to choose those settings. But if you don’t assume that (and rational agents generally shouldn’t make unwarranted assumptions), then other interpretations like superdeterminism become available.

Under superdeterminism there is no randomness: even quantum events are fully determined by prior states and could, in principle, be predicted precisely.

The main problem people have with superdeterminism is that, to explain some experiments, two seemingly unrelated events must share a common cause in the past that makes them correlated (not statistically independent). The two events are a: the state of the particle being measured, and b: the setting of the measuring device.

This seems hard to believe, and people call it a “conspiratorial correlation”, like a particle knowing in advance how it would be measured. But once again, just because it’s hard for humans to conceive doesn’t mean it isn’t true.

The reason I bring up superdeterminism is that it’s eerily similar to Newcomb’s problem: people who are wedded to the idea of free will seem unable to conceive of a common cause, so they reject the correlation.

It seems our view of reality affects thought experiments related to free will: how we approach them depends on our view of reality, which in turn depends on how we view free will.

Arrival

The film Arrival (2016) is a thoughtful, critically acclaimed sci-fi drama focused on linguist Dr. Louise Banks (Amy Adams) attempting to communicate with extraterrestrials after 12 mysterious ships land worldwide. It subverts typical alien invasion tropes by focusing on language, time perception, and empathy over action.

I am not going to spoil the film for those who haven’t seen it. All I’m going to say is that many people find the ending confusing, because it’s somehow tied to the beginning, but at the very start the protagonist says:

But now I’m not so sure I believe in beginnings and endings.

However, for people who understand recursivity, don’t believe in free will, and seriously consider determinism, there is no paradox.

Semantic closure

Note: this section was generated by ChatGPT (GPT 5.5) after the essay recursively converged to its current form.

Humans seem to have a deep psychological need for semantic closure: the feeling that ideas resolve cleanly into stable meanings. When a statement refers to itself, or a system recursively depends on itself, many people experience a kind of cognitive vertigo, as though the mind has lost stable footing.

But not every self-referential system is a paradox.

Some recursive systems converge to a stable fixed point. Others oscillate indefinitely. Others never converge at all. The difficulty is that humans often expect every meaningful question to admit a clean linear resolution, and recursive systems do not always behave that way.
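The three behaviors can be illustrated by repeatedly feeding a function its own output. The functions in this Python sketch are arbitrary examples chosen for illustration. Iterating cos(x) converges to its fixed point (about 0.739), iterating x ↦ 1 - x bounces between 0 and 1 forever, and iterating x ↦ 2x runs away without bound.

```python
import math


def iterate(f, x0, steps):
    """Apply f repeatedly, returning [x0, f(x0), f(f(x0)), ...]."""
    xs = [x0]
    for _ in range(steps):
        xs.append(f(xs[-1]))
    return xs


converging = iterate(math.cos, 1.0, 100)        # settles where cos(x) == x
oscillating = iterate(lambda x: 1 - x, 0.0, 6)  # 0, 1, 0, 1, ... never settles
diverging = iterate(lambda x: 2 * x, 1.0, 10)   # 1, 2, 4, ... grows without bound

print(round(converging[-1], 6))
print(oscillating)
print(diverging[-1])
```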

The opening puzzle about choosing an answer at random illustrates this nicely. The reason many people perceive it as paradoxical is not that it contains a contradiction, but that the recursive structure fails to settle into a stable semantic equilibrium. There is no fixed point. The truth value of each answer depends on the distribution of correct answers, which in turn depends on the truth values themselves.

But a slight modification of the answers suddenly allows the system to stabilize. The recursive loop closes consistently.

Newcomb’s problem has a similar structure. People who insist on stepping outside the predictive system experience the problem as unstable or paradoxical. “Surely my present choice must remain independent from the prediction.” But once prediction, agent, and choice are treated as parts of the same recursively coupled system, the problem begins to converge toward a stable equilibrium.

The same applies to free will.

If consciousness itself is embedded inside a recursive causal structure, then the feeling that “I could simply choose otherwise” may be the result of attempting to mentally step outside a system of which we are necessarily a part. But there may be no outside vantage point available. The universe does not grant us semantic closure simply because we psychologically demand it.

Even this essay was written recursively. In order to write the next sentence, the previous one had to exist first. The final structure could not be fully known in advance, because arriving at it required traversing the intermediate steps. Like a cellular automaton computing its next row, the essay had to compute itself forward through time.

Perhaps this is why recursive ideas feel uncomfortable to many people. They dissolve the intuitive boundary between observer and system, thinker and thought, predictor and predicted. The mind searches for solid ground only to discover it is standing inside the very process it is trying to analyze.

And maybe that is why Newcomb’s problem feels so unsettling.

Not because it is irrational, but because it threatens the way we intuitively model ourselves.

What does it mean?

If you understand that our actions are likely predetermined by prior causes and there’s no free will, the world becomes much simpler to understand. When someone cuts you off in traffic, there’s an immediate feeling of anger, but once you understand that person could not have done anything else, there’s not much point in being angry at the inevitable.

Many people insist on believing that people truly could have done otherwise and therefore righteous anger is warranted. But what if that’s not the case?

When dealing with coworkers, neighbors, or even your own children, considering that nobody’s choices are ever really free can only make us more understanding, less angry, and less anxious.

About half the people who engage with Newcomb’s problem don’t seem to have a problem with their choice being predicted. I bet many of them understand recursive thinking and have no trouble imagining how their actions can be influenced by other actions, which in turn were influenced by other actions, ad infinitum. For us, there is no paradox.

But it seems clear that not everyone thinks that way, very likely because in order to properly conceive of a problem that requires recursive thinking, they would need to be familiar with recursive thinking in the first place. So if they pick two boxes in Newcomb’s problem, it’s because they have to believe they could make a different choice than the one being predicted, because they have free will.

They have no choice.